IT is common knowledge that Facebook (alongside big tech’s social media giants) is on the Congressional hot seat. Crosshairs. Their laser beam is shining from both sides of the political aisle, but for divergent calculi. No matter.

IN this respect, much of the public is aware that Zuckerberg has got a lot of ‘splainin’ to do – insofar as the largest social media site on the planet played fast and loose with its users’ privacy through MASSIVE scoop-ups of personal data, and then some. Effectively, over and over again, Facebook committed the biggest heists of private data in history! Indefensible. And as hard as Facebook’s highly compensated hired guns attempt to whitewash the illegal grabs, only those (in Congress, and within D.C. agencies) who stand to benefit from their ‘explanations’ will believe their dodging, weaving, and overall spin. Yes, how many times have they opined: “we’re committed to protecting people’s privacy.” Hogwash. Liars.

ON the other hand, fewer are aware of an equally nefarious breach, that is, one which allows Islam’s jihadists a platform to comfortably congregate, friend like-minded terrorists, message, plot, and ultimately inspire one another to wage global jihad.

IN fact, when exposed for their out-sized enabling in this bloody arena, once again, promises, promises were made by Facebook’s familiar mouthpieces. Of course, they were veddy, veddy sorry!

NOW, before we get to the latest ‘news’, it is incumbent upon us to look back to September 2016, three years ago to date. This is so because that is when the sole book on the aforementioned ‘news’ made its blessed debut at AMAZON, as well as at every major bookseller.

MORE specifically, an entire chapter is dedicated to the charge-sheet at hand, and it couldn’t be any clearer – CHAPTER SEVEN: FACEBOOK’S COUNTERTERROR EFFORTS: CLOSING THE BARN DOOR AFTER THE HORSES HAVE ESCAPED reads like a running indictment against Facebook and its hired guns.

WHILE the chapter is fifteen pages long, the following excerpts (pages 100 and 102) set the stage – as to why Congress is grilling Facebook today, and, more importantly, where the truth really lies.

Realistically speaking, Monika Bickert’s rare interview (along with the rest of Facebook’s heavy-hitters) should be deemed little more than a stepped-up rendering of a fable and fairytale, spun from a highly compensated hired gun. It is that transparent.

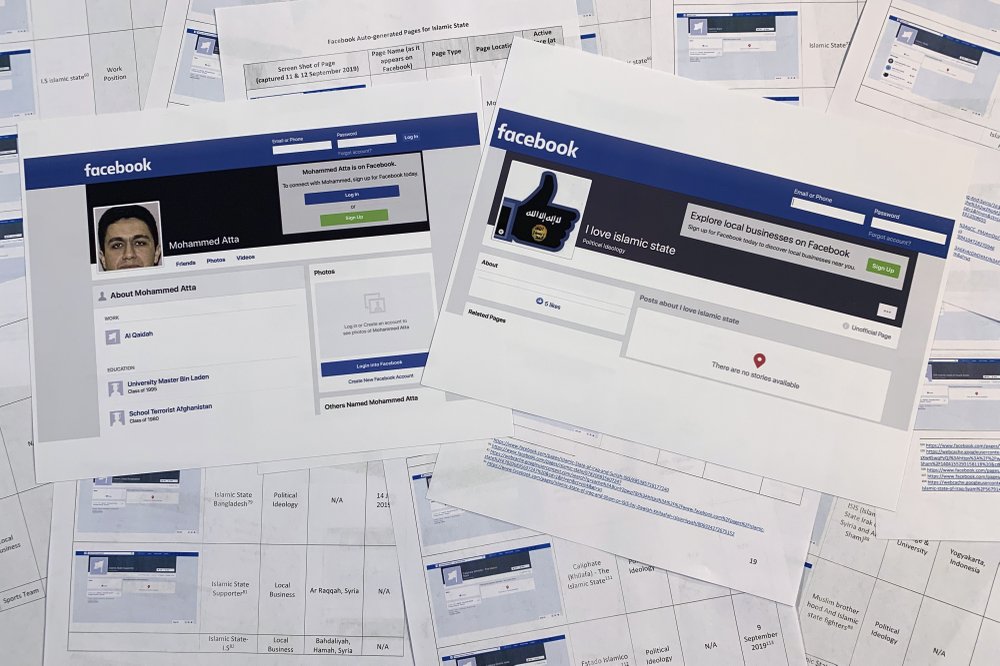

For all practical purposes, if any of Facebook’s associated spin is even remotely truthful and believable, how could it be that a logic-based, rational, and competent “Community Operations” department – overseen by a newly appointed “Counterterrorism Squad”, no less – mistook the following page (cited below) for the real (ISIS) thing? After all, a crucial part of due diligence mandates an across the board application of critical analysis……By the way, this is Counterterrorism 101…….

Then there’s the alleged (al-Qaeda/ISIS) gold/money laundering case we discussed in Chapter Two. How could it be that under their embarrassingly heralded “Community Operations” department, paradoxically, overseen by a newly appointed “Counterterrorism Squad”, they missed what has been right under their noses, hiding in plain sight?

Could it be that they are not as seriously interested in checking out what’s what on their pages, by those who may, or may not, operate so-called legitimate businesses? After all, it is a Counterterror Squad’s mandate to execute their due diligence and determine who’s who…..

DEAR reader, the evidence laid out within BANNED, in excruciating and bulletproof detail, is overwhelming – as to the site’s enabling of Islamic militant jihad!

LO and behold, since Facebook’s much ballyhooed counterterrorism roll-out (pinkie swearing to deal with terrorists congregating on their platform), how can it be, once again, they got away with another smoke and mirrors show – this time, a blood-soaked breach atop a MASSIVE data grab??

WASHINGTON (AP) — In the face of criticism that Facebook is not doing enough to combat extremist messaging, the company likes to say that its automated systems remove the vast majority of prohibited content glorifying the Islamic State group and al-Qaida before it’s reported.

But a whistleblower’s complaint shows that Facebook itself has inadvertently provided the two extremist groups with a networking and recruitment tool by producing dozens of pages in their names.

The social networking company appears to have made little progress on the issue in the four months since The Associated Press detailed how pages that Facebook auto-generates for businesses are aiding Middle East extremists and white supremacists in the United States.

On Wednesday, U.S. senators on the Committee on Commerce, Science, and Transportation will be questioning representatives from social media companies, including Monika Bickert, who heads Facebook’s efforts to stem extremist messaging.

The new details come from an update of a complaint to the Securities and Exchange Commission that the National Whistleblower Center plans to file this week. The filing obtained by the AP identifies almost 200 auto-generated pages — some for businesses, others for schools or other categories — that directly reference the Islamic State group and dozens more representing al-Qaida and other known groups. One page listed as a “political ideology” is titled “I love Islamic state.” It features an IS logo inside the outlines of Facebook’s famous thumbs-up icon.

In response to a request for comment, a Facebook spokesperson told the AP: “Our priority is detecting and removing content posted by people that violates our policy against dangerous individuals and organizations to stay ahead of bad actors. Auto-generated pages are not like normal Facebook pages as people can’t comment or post on them and we remove any that violate our policies. While we cannot catch every one, we remain vigilant in this effort.”

Facebook has a number of functions that auto-generate pages from content posted by users. The updated complaint scrutinizes one function that is meant to help business networking. It scrapes employment information from users’ pages to create pages for businesses. In this case, it may be helping the extremist groups because it allows users to like the pages, potentially providing a list of sympathizers for recruiters.

The new filing also found that users’ pages promoting extremist groups remain easy to find with simple searches using their names. They uncovered one page for “Mohammed Atta” with an iconic photo of one of the al-Qaida adherents, who was a hijacker in the Sept. 11 attacks. The page lists the user’s work as “Al Qaidah” and education as “University Master Bin Laden” and “School Terrorist Afghanistan.”

Facebook has been working to limit the spread of extremist material on its service, so far with mixed success. In March, it expanded its definition of prohibited content to include U.S. white nationalist and white separatist material as well as that from international extremist groups. It says it has banned 200 white supremacist organizations and 26 million pieces of content related to global extremist groups like IS and al-Qaida.

It also expanded its definition of terrorism to include not just acts of violence intended to achieve a political or ideological aim, but also attempts at violence, especially when aimed at civilians with the intent to coerce and intimidate. It’s unclear, though, how well enforcement works if the company is still having trouble ridding its platform of well-known extremist organizations’ supporters.

But as the report shows, plenty of material gets through the cracks — and gets auto-generated.

The AP story in May highlighted the auto-generation problem, but the new content identified in the report suggests that Facebook has not solved it.

The report also says that researchers found that many of the pages referenced in the AP report were removed more than six weeks later on June 25, the day before Bickert was questioned for another congressional hearing.

The issue was flagged in the initial SEC complaint filed by the center’s executive director, John Kostyack, that alleges the social media company has exaggerated its success combatting extremist messaging.

“Facebook would like us to believe that its magical algorithms are somehow scrubbing its website of extremist content,” Kostyack said. “Yet those very same algorithms are auto-generating pages with titles like ‘I Love Islamic State,’ which are ideal for terrorists to use for networking and recruiting.”

MESSAGE FAILED

- This message contains content that has been blocked by our security systems.

- If you think you’re seeing this by mistake, please let us know.

Yes, additional “proof-in-the pudding” as to why “BANNED: How Facebook Enables Militant